Sogou found about 12,068 related results

Online robots.txt File Generator - UU Online Tools

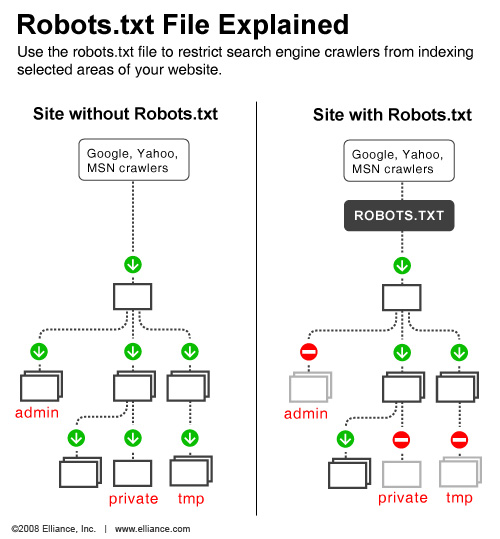

robots.txt Explained [Easy to Understand] - GaoYanbing - 博客园 (cnblogs)

Robots.txt and meta robots Tags: Controlling Crawlers on International Websites _ Pages _ Search Engines

How to Configure the robots.txt File for Apache - 编程学习网 (Programming Learning Network)

robots.txt File Explained - passport_daizi's Blog - CSDN Blog

- From: passport_daizi

- robots.txt ...

The robots.txt File You Need to Know - CSDN Blog

- From: weixin_30662011

- Robots.txt file from http://www.seovip.cn: `# All robots will spider the domain`, `User-agent: *`, `Disallow:`. What the text above expresses...
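The snippet above quotes a rule set that applies to every crawler (`User-agent: *`) with an empty `Disallow:` value, which blocks nothing. As a minimal sketch of how a crawler might interpret such a file, Python's standard-library `urllib.robotparser` can parse the text and answer per-URL questions. The `BLOCK_ALL` contrast case, the `is_allowed` helper, and the example URL are illustrative assumptions, not part of the original result.

```python
from urllib.robotparser import RobotFileParser

# Rule set quoted in the snippet: every crawler ("*"), empty Disallow = nothing blocked.
ALLOW_ALL = """\
# All robots will spider the domain
User-agent: *
Disallow:
"""

# Assumed contrast case (not from the snippet): "Disallow: /" blocks every path.
BLOCK_ALL = """\
User-agent: *
Disallow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Hypothetical helper: parse robots.txt text and ask whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "http://www.example.com/some/page.html"  # hypothetical URL
    print(is_allowed(ALLOW_ALL, "Googlebot", url))  # True
    print(is_allowed(BLOCK_ALL, "Googlebot", url))  # False
```

The only difference between the two rule sets is the value after `Disallow:`; an empty value permits everything, while `/` matches every path and denies it.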

Powerful Crawler Tips: Quickly Crawling Websites Using robots.txt